Search optimization has changed fundamentally. For years, SEO meant keywords and text. But as AI reshapes discovery, that text-centric approach is rapidly becoming obsolete. The future belongs to multimodal AI: systems that process text, images, video, and audio together to understand content holistically.

The numbers are compelling. The multimodal AI market was valued at $1.73 billion in 2024 and is projected to reach $10.89 billion by 2030, growing at a 36.8% CAGR. Meanwhile, video content accounts for 82% of all internet traffic, and videos are 50 times more likely to rank on Google’s first page than text-only content. (Source: Grand View Research)

For businesses navigating AI Search Optimization (AISO), the message is clear: if you’re optimizing only for text, you risk being invisible to the systems that increasingly determine what users see.

Multimodal AI doesn’t just prefer diverse AI content formats; it needs them to accurately understand context, relevance, and value. Companies that master unified multimedia optimization aren’t only getting better results. They’re playing an entirely different game.

How LLMs and Multimodal Models Consume Various Media

Understanding how AI systems process different content formats is essential for effective optimization. Multimodal AI doesn’t treat text, images, video, and audio separately; it integrates them into a richer, contextual understanding.

Text processing remains foundational

Even in multimodal AI systems, text provides crucial context. LLMs extract semantic meaning, identify entities, and establish topical relevance through written content. Keywords, headers, and descriptions create the narrative framework that helps AI understand what other content elements represent.

Image understanding goes beyond pixels

When multimodal AI processes images, it identifies objects, recognizes faces, reads text within images, understands spatial relationships, and interprets context.

AI systems increasingly favor images with contextual richness: images that tell stories rather than simply display objects.

Video combines temporal and spatial intelligence

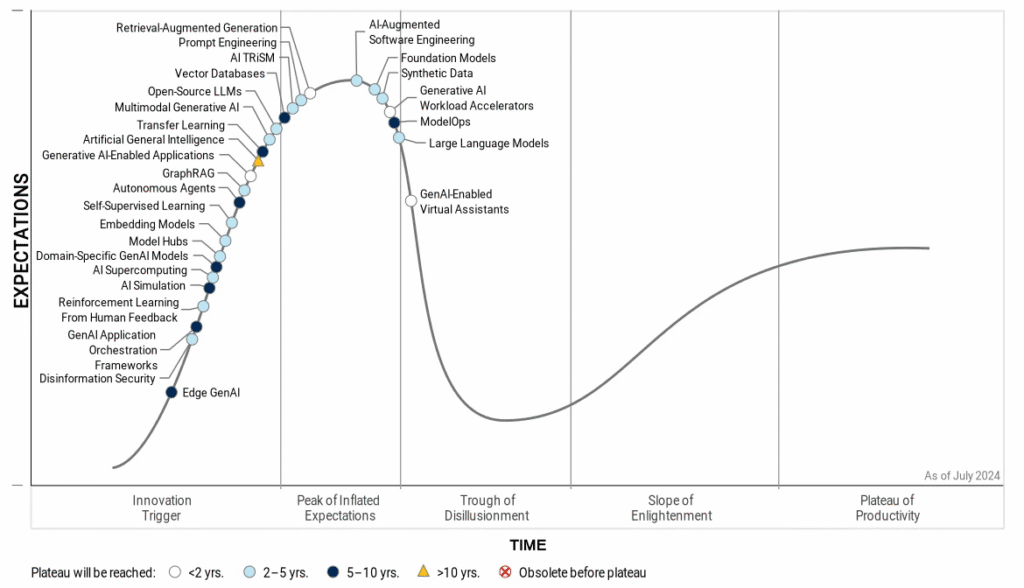

Video processing adds the dimension of time. Multimodal models analyze video frame by frame, track movement, identify scene transitions, extract audio, and follow narrative flow. Gartner predicts that 40% of generative AI solutions will be multimodal by 2027, which suggests video processing capabilities will become standard. (Source: Gartner)

Audio adds emotional and tonal context

Voice processing allows AI to detect emotion in speech, identify speakers, transcribe spoken content, and understand emphasis.

The audio data segment is expected to grow at an 11.71% CAGR through 2034, reflecting the increasing sophistication of audio processing. (Source: Proficient Market Insights)

Cross-modal connections create deeper understanding

The real power emerges when AI connects information across formats. A cooking video with a spoken recipe, on-screen text, and a visual demonstration provides layered information that no single format could deliver. AI systems that identify these connections can better judge relevance, authenticity, and value, all key factors in ranking algorithms.

This makes image + video SEO critical for visibility.

Strategies to Mix Formats to Signal Relevance

Creating multimodal content isn’t just about including different media types; it’s about combining them strategically to maximize AI comprehension and user value.

Start with intentional content architecture

Before creating content, map out how different formats will work together. Ask: What is the core message? Which format communicates which aspect best?

Text might provide an overview, while diagrams illustrate steps, video demonstrates execution, and audio offers commentary.

Each format serves a specific purpose rather than duplicating information.

Use text to provide searchable context for visual media

Every image, video, and audio file should be surrounded by relevant text: descriptive filenames, comprehensive alt text, detailed captions, contextual body copy, and strategic keywords. When you embed a video about email marketing, surrounding text should discuss email marketing concepts, not just say “watch this video.”
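To make the filename and alt-text part of this checklist concrete, here is a minimal audit sketch using Python’s standard `html.parser`. It flags `<img>` tags with missing alt text, alt text that opens with a generic phrase like “image of,” or camera-style filenames like “IMG_1234.jpg,” and it turns a plain description into a descriptive, hyphenated filename. The patterns in `GENERIC_FILENAME` and `GENERIC_ALT_PREFIXES`, and the sample page, are assumptions for illustration; tune them to your own site.

```python
import re
from html.parser import HTMLParser

# Heuristic patterns (assumptions, tune for your site) for "generic" names and alt text.
GENERIC_FILENAME = re.compile(r"^(img|dsc|image|photo)[_-]?\d*\.\w+$", re.IGNORECASE)
GENERIC_ALT_PREFIXES = ("image of", "picture of", "photo of")


def descriptive_filename(description: str, extension: str) -> str:
    """Turn a plain-language description into a lowercase, hyphenated filename."""
    slug = re.sub(r"[^a-z0-9]+", "-", description.lower()).strip("-")
    return f"{slug}.{extension.lstrip('.')}"


class MediaAuditParser(HTMLParser):
    """Collects <img> tags whose filename or alt text looks generic or is missing."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or ""
        alt = (attributes.get("alt") or "").strip()
        filename = src.rsplit("/", 1)[-1]
        if GENERIC_FILENAME.match(filename):
            self.issues.append((src, "generic filename"))
        if not alt:
            self.issues.append((src, "missing alt text"))
        elif alt.lower().startswith(GENERIC_ALT_PREFIXES):
            self.issues.append((src, "alt text starts with a generic phrase"))


def audit_images(page_html: str):
    """Return (src, problem) pairs for every image that needs attention."""
    parser = MediaAuditParser()
    parser.feed(page_html)
    parser.close()
    return parser.issues


# Hypothetical page snippet for illustration.
page = """
<p>Our email marketing dashboard tracks opens and clicks for every campaign.</p>
<img src="/media/IMG_1234.jpg" alt="image of dashboard">
<img src="/media/email-marketing-dashboard-analytics-screenshot.jpg"
     alt="Email marketing dashboard showing open and click-through rates by campaign">
<img src="/media/team.png">
"""

for src, problem in audit_images(page):
    print(f"{src}: {problem}")

print(descriptive_filename("Email marketing dashboard analytics screenshot", "jpg"))
```

A script like this only catches mechanical problems. Whether the surrounding body copy actually discusses what the media shows still needs a human editor.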

Create hierarchical information structures

Effective multimodal content follows patterns: text headlines establish topics, images provide visual entry points, video offers deep dives, audio extends engagement, and text summaries reinforce takeaways.

This hierarchy allows users and AI systems to engage at different depths.

Leverage video thumbnails strategically

Thumbnails with human faces improve click-through rates by 28%. Beyond CTR, thumbnails serve as visual summaries that help AI categorize content. Create custom thumbnails with clear text overlays, relevant imagery, and consistent branding. (Source: zebracat AI)

Design for accessibility, which aids AI comprehension

Closed captions benefit both hearing-impaired users and AI transcription systems. Descriptive alt text helps both visually impaired users and image recognition algorithms. Videos are 50x more likely to show up on the first page of Google than a text page, partly because video results have a 41% higher click-through rate than basic text pages. (Source: Rev)

Accessibility and optimization point in the same direction, which is exactly what unified multimedia optimization principles call for.
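Captions are easiest to reuse across platforms when they live in a standard file. Below is a minimal sketch that writes cues in WebVTT, the W3C caption format used by the HTML `<track>` element. The cue text and timings are hypothetical; in practice they would come from your edited transcript.

```python
def format_timestamp(seconds: float) -> str:
    """Format seconds as a WebVTT timestamp, HH:MM:SS.mmm."""
    total_ms = round(seconds * 1000)
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, ms = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}.{ms:03d}"


def to_webvtt(cues):
    """Build a WebVTT document from (start_seconds, end_seconds, text) cues."""
    lines = ["WEBVTT", ""]  # required header, followed by a blank line
    for start, end, text in cues:
        lines.append(f"{format_timestamp(start)} --> {format_timestamp(end)}")
        lines.append(text)
        lines.append("")  # a blank line ends each cue
    return "\n".join(lines)


# Hypothetical cues for an email marketing tutorial.
cues = [
    (0.0, 3.5, "Welcome to our guide to email marketing analytics."),
    (3.5, 8.25, "First, open the campaign dashboard and select a date range."),
]
print(to_webvtt(cues))
```

The same cue list can double as the raw material for a full transcript, so captions and transcript never drift apart.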

Create content clusters with multimodal variety

Instead of isolated pieces, develop content clusters where different AI content formats explore related topics. Your pillar content might be a text guide, with supporting tutorial videos, explanatory images, case study podcasts, and data visualizations.

This signals topical depth to multimodal AI systems.

Structured Metadata, Alt-Text, and Transcripts

| ASPECT | DESCRIPTION | BEST PRACTICES |
| --- | --- | --- |
| Alt-text as AI interpretation guides | Descriptive, specific alt-text helps AI understand an image by explaining what it contributes to the page. | Use relevant keywords naturally and avoid generic phrases like “image of”; e.g., “marketing team reviewing quarterly campaign performance data on whiteboard.” |
| Video transcripts | Transcripts give AI searchable text and improve keyword indexing, accessibility, and internal linking opportunities. | Upload complete, edited transcripts rather than relying on auto-generated captions. |
| Schema markup for rich media content | Schema types such as VideoObject, ImageObject, AudioObject, HowTo, or FAQPage improve categorization, signal content quality to search algorithms, and can make pages eligible for rich results that boost image/video SEO. | Choose the schema type that matches the content on the page and validate the markup before publishing. |
| Filename conventions | Descriptive filenames with target keywords help AI understand a file before it processes the content itself. | Instead of generic names like “IMG_1234.jpg,” use descriptive names like “email-marketing-dashboard-analytics-screenshot.jpg.” |
| Metadata consistency across platforms | Consistent metadata helps AI recognize, aggregate, and link multimodal content authority signals across platforms. | Maintain uniform keywords, titles, descriptions, categories, and transcripts across YouTube, websites, and social media. |
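As an illustration of the schema row, here is a minimal sketch that builds VideoObject markup as JSON-LD, the structured-data format Google recommends. It includes `name`, `thumbnailUrl`, and `uploadDate` (the properties Google’s video structured data documentation marks as required), plus `description`, `contentUrl`, `duration`, and an optional `transcript`. The helper name and all example values are hypothetical.

```python
import json


def video_object_jsonld(name, description, thumbnail_url, upload_date,
                        content_url, duration, transcript=None):
    """Build a schema.org VideoObject dictionary ready for JSON-LD output."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,  # ISO 8601 date
        "contentUrl": content_url,
        "duration": duration,  # ISO 8601 duration, e.g. "PT4M30S"
    }
    if transcript:
        data["transcript"] = transcript  # full, edited transcript text
    return data


# Hypothetical example values; replace with your own page's details.
markup = video_object_jsonld(
    name="Email Marketing Dashboard Walkthrough",
    description="How to read open rates, click-through rates, and conversions in an email marketing dashboard.",
    thumbnail_url="https://www.example.com/media/email-marketing-dashboard-thumbnail.jpg",
    upload_date="2024-05-01",
    content_url="https://www.example.com/media/email-marketing-dashboard-walkthrough.mp4",
    duration="PT4M30S",
    transcript="In this video we walk through the email marketing dashboard...",
)

# Paste the output into the page that hosts the video.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Generating markup from one function keeps titles, descriptions, and transcripts identical wherever the same video is published, which also serves the metadata-consistency row.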

Experiment Ideas and Case Studies

Experiment 1: Text-only versus multimodal performance

Create two versions of the same content:

- Version A: text article only.

- Version B: text plus custom images, embedded video, infographic, and audio version.

After 90 days, compare organic traffic, engagement time, conversions, and AI citation frequency.
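Once the 90 days are up, a simple way to compare the two versions is relative lift per metric. The sketch below assumes you have already exported the four metrics for each version; the `PageMetrics` fields and the numbers are placeholders, not real results, and AI citation counts depend on whatever tracking method you use. With only one pair of pages, small differences may be noise, so treat the output as directional.

```python
from dataclasses import dataclass, fields
from typing import Dict, Optional


@dataclass
class PageMetrics:
    organic_sessions: int
    avg_engagement_seconds: float
    conversions: int
    ai_citations: int  # assumption: counted with your own citation-tracking method


def relative_lift(baseline: float, variant: float) -> Optional[float]:
    """Percent change from baseline to variant; None when the baseline is zero."""
    if baseline == 0:
        return None
    return (variant - baseline) / baseline * 100


def compare_versions(version_a: PageMetrics, version_b: PageMetrics) -> Dict[str, Optional[float]]:
    """Lift of version B (multimodal) over version A (text-only) for every metric."""
    return {
        f.name: relative_lift(getattr(version_a, f.name), getattr(version_b, f.name))
        for f in fields(PageMetrics)
    }


# Placeholder numbers for illustration only, not real results.
version_a = PageMetrics(organic_sessions=1200, avg_engagement_seconds=95.0, conversions=18, ai_citations=3)
version_b = PageMetrics(organic_sessions=1500, avg_engagement_seconds=140.0, conversions=24, ai_citations=3)

for metric, lift in compare_versions(version_a, version_b).items():
    shown = "n/a" if lift is None else f"{lift:+.1f}%"
    print(f"{metric}: {shown}")
```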

Experiment 2: Generic versus optimized media metadata

Select 20 existing images or videos. For 10, implement comprehensive optimization: descriptive filenames, detailed alt-text with keywords, complete transcripts, schema markup, and contextual text.

Leave the other 10 with generic metadata, then track which group generates more impressions in image/video search and appears in more rich results.
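One detail worth getting right is how the 20 assets are split. If you hand-pick the 10 to optimize, you may unconsciously choose stronger performers. A minimal sketch of random assignment, seeded so the split is reproducible; the asset IDs are hypothetical stand-ins for your own images or videos:

```python
import random


def split_assets(asset_ids, seed=2024):
    """Randomly split assets into two equal groups: optimized and control."""
    shuffled = list(asset_ids)
    random.Random(seed).shuffle(shuffled)  # seeded so the assignment is reproducible
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


# Hypothetical asset IDs standing in for your 20 images or videos.
assets = [f"asset-{i:02d}" for i in range(1, 21)]
optimized, control = split_assets(assets)

print("Apply full optimization to:", ", ".join(sorted(optimized)))
print("Leave with generic metadata:", ", ".join(sorted(control)))
```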

Case study: E-commerce product pages

Rocky Brands, a footwear retailer, used BrightEdge’s AI-powered SEO tools for keyword research, content optimization, and performance tracking.

Experiment 3: Podcast episode optimization

Implement full multimodal treatment for podcast episodes:

- Detailed show notes with timestamps

- Key quotes as graphics

- Video versions

- Complete transcripts with keywords

- Follow-up blog posts

- AudioObject schema
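Two items on this list, timestamped show notes and AudioObject schema, can be generated from the same episode data. The sketch below formats chapter start times for show notes and builds an AudioObject dictionary with schema.org’s `contentUrl`, `duration` (as an ISO 8601 duration), and `transcript` properties. The function names, episode details, and chapter list are hypothetical.

```python
import json


def chapter_timestamp(seconds: int) -> str:
    """Format a chapter start as MM:SS, or H:MM:SS for episodes over an hour."""
    minutes, secs = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes:02d}:{secs:02d}"


def iso8601_duration(seconds: int) -> str:
    """Format a length in seconds as an ISO 8601 duration such as PT33M or PT1H5M10S."""
    minutes, secs = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    result = "PT"
    if hours:
        result += f"{hours}H"
    if minutes:
        result += f"{minutes}M"
    if secs or result == "PT":
        result += f"{secs}S"
    return result


def show_notes(chapters):
    """One 'timestamp title' line per (start_seconds, title) chapter."""
    return "\n".join(f"{chapter_timestamp(start)} {title}" for start, title in chapters)


def audio_object_jsonld(name, description, content_url, duration_seconds, transcript):
    """schema.org AudioObject dictionary for the episode page's JSON-LD."""
    return {
        "@context": "https://schema.org",
        "@type": "AudioObject",
        "name": name,
        "description": description,
        "contentUrl": content_url,
        "duration": iso8601_duration(duration_seconds),
        "transcript": transcript,
    }


# Hypothetical episode data for illustration.
chapters = [
    (0, "Introduction"),
    (95, "Why transcripts matter for AI search"),
    (1510, "Listener questions"),
]
print(show_notes(chapters))

episode = audio_object_jsonld(
    name="Episode 12: Multimodal Content for AI Search",
    description="How transcripts, show notes, and schema help AI systems understand a podcast.",
    content_url="https://www.example.com/podcast/episode-12.mp3",
    duration_seconds=1980,
    transcript="Welcome to the show. Today we're talking about multimodal content...",
)
print(json.dumps(episode, indent=2))
```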

The Path Forward

The shift toward multimodal AI isn’t on the horizon; it is the current reality, and adapting to it is no longer optional. As AI increasingly works across text, images, audio, and video, the companies that succeed will optimize their presence across every content format, not just one.

This doesn’t mean abandoning text or creating video for video’s sake. It means thinking strategically about how different formats work together to create comprehensive experiences that both users and AI systems understand. The businesses winning in image + video SEO aren’t just adding media; they’re architecting multimodal ecosystems where every format enhances understanding.

Technical execution matters enormously. Alt-text, transcripts, schema markup, filenames, and metadata aren’t optional; they’re essential bridges between content and algorithmic comprehension. AI systems can’t fully understand content they can’t properly categorize.

Start where you are. Begin with high-traffic content. Add one additional format. Implement proper metadata. Measure results. Refine based on what works. Gradually expand your multimodal approach as you build capabilities.

The future of AISO is inherently multimodal. The question isn’t whether to adapt, but how quickly you can master unified multimedia optimization while competitors optimize text alone. Those who act now will establish algorithmic advantages that compound over time, becoming the cited sources and comprehensive answers that multimodal AI systems rely on.

And if you’re looking to amplify your AI visibility with multimodal content, book a call with ReSO now.