Leaders trust AI search (before even fully understanding how it works)

Search is undergoing its most fundamental shift since the early days of Google. LLM searches (users searching on ChatGPT, Perplexity, etc.) cannot be considered an edge case user acquisition channel anymore. They are shaping how people discover, evaluate, and trust information. While adoption and trust in AI search are accelerating, understanding of how visibility actually works inside these systems remains fragmented.

This report is built to close that gap. It draws on analysis of:

- 5,000+ real search prompts

- Across 100+ brands, from across industries

- Primary research from 150+ marketing leaders

to move beyond opinion and examine how AI-driven search actually behaves and what that means for visibility going forward.

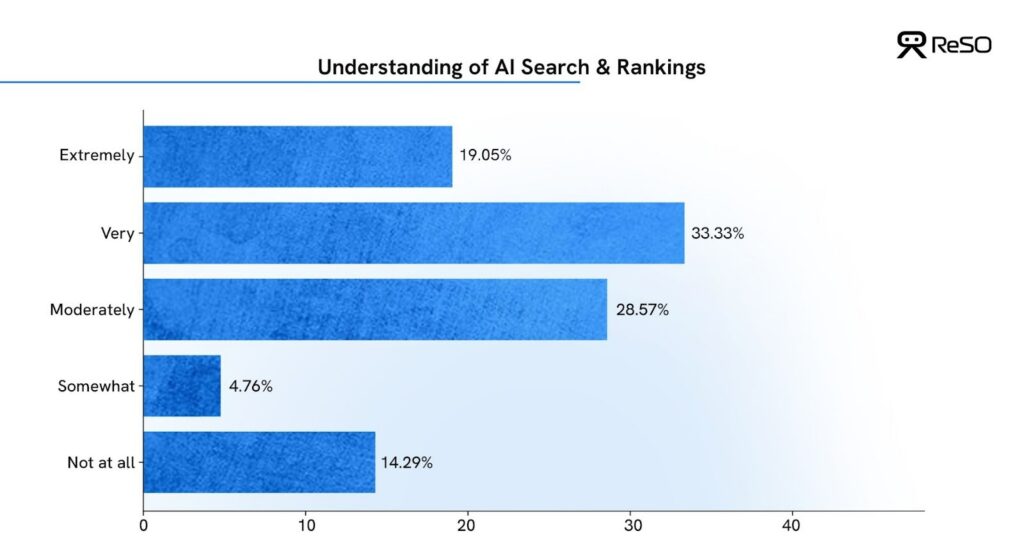

Awareness is high, but understanding is uneven

Almost 100% of marketing leaders say that AI search is an integral part of the buyer journey, but when asked how well they understand AI search and rankings, marketing leaders reveal that they are aware but not fully equipped.

- 52% of leaders say they have a decent or extremely strong understanding of how AI search works

- 33% admit their understanding is limited, or even minimal

This creates a visible gap:

Leaders recognise AI search as important, but nearly 1 in 2 are still navigating it without deep clarity on how visibility is actually determined.

30% of leaders have already started investing in AI-driven SEO (GEO/AISO) via agencies and 2-3 different tools, but few understand how ranking works, or whether it should even be called “ranking” anymore.

Most of them are experimenting as the urgency to plan this channel has arrived faster than operational confidence.

Key takeaways

– AI search is an integral part of the buyer journey & heavily influences their decision.

– If your team does not know how AI visibility works, you’re operating blind.

– Marketing budget allocation should be done based on LLM mechanics, not market hype cycles.

The trust shift has already happened

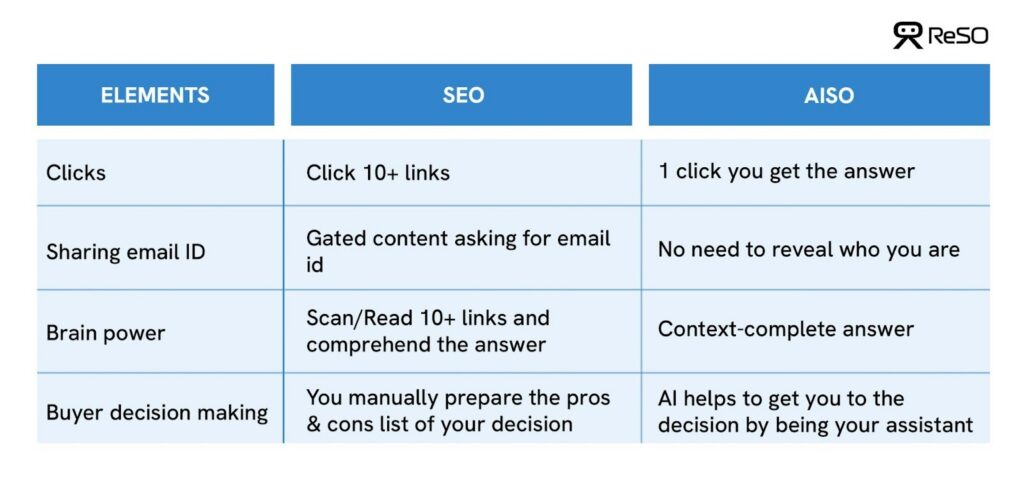

Trust is the prerequisite to behaviour change. And behaviour changes when effort reduces.

Every major platform/behavioural shift followed this pattern. Take Uber for reference – they didn’t create demand for taxis but reduced the effort of getting one. Fewer steps. Less uncertainty. Faster outcome. That reduction in friction permanently changed behaviour.

AI search is following the same trajectory.

Users no longer want to open 10 tabs, scan multiple articles, compare perspectives, and manually build conclusions. They want to set context, remove friction, and instantly get the answer they need.

Trust transfers when change is frictionless, and that’s what we are witnessing all around us.

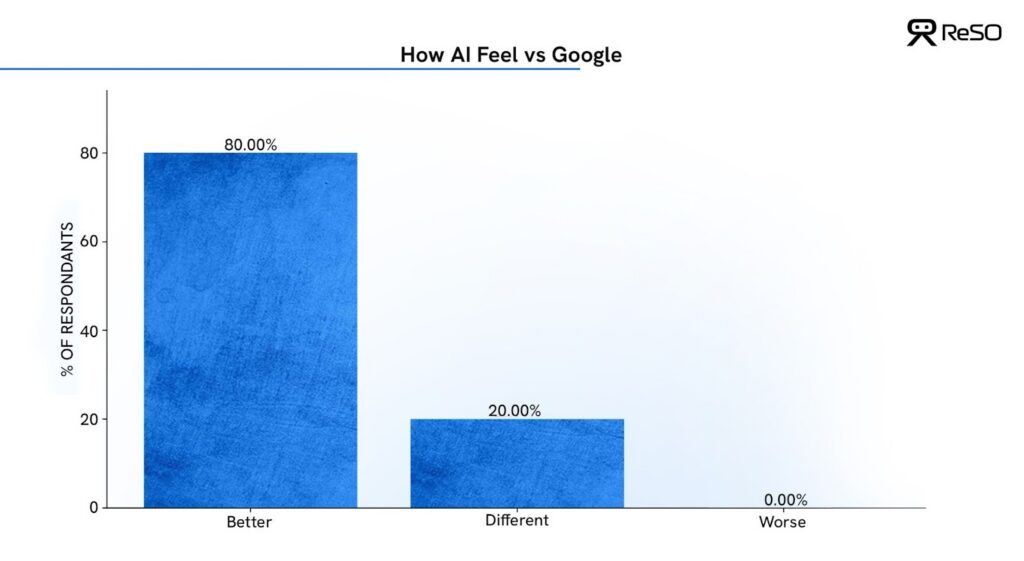

Perception shifts sharply when leaders compare AI-powered answers to traditional Google results.

- 80% say AI answers feel better – more specific and more contextual

- The remaining 20% say it depends on the query

This is a critical signal.

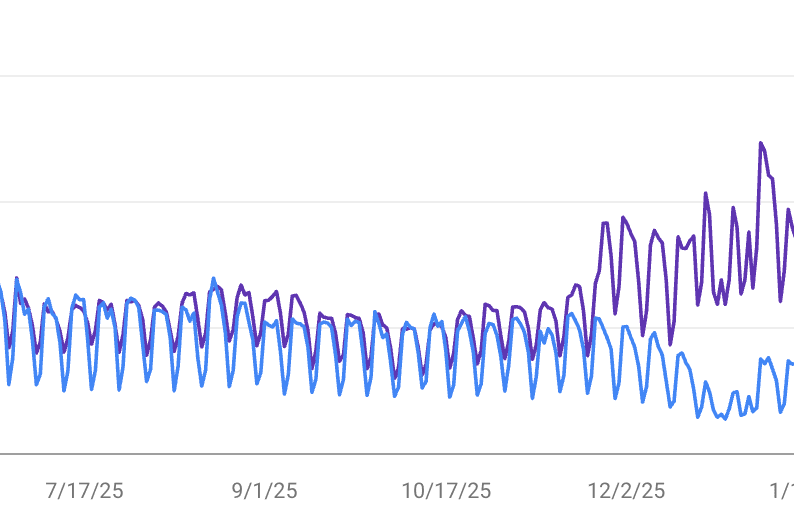

Once answers feel better, usage follows, and discovery patterns shift quietly but permanently. The result is what we know as the Crocodile Mouth effect, where impressions continue to rise while zero-click search causes clicks to stagnate or decline.

Source: ReSO

Another important factor to consider: a large-scale study by Semrush on Google AI Overviews also shows that AI-generated answers often surface content that did not previously rank in the top organic results. Visibility is no longer determined only by position; it’s determined by the relevance of the synthesized answer for that user.

Key takeaways

– Gated education (essentially, any content behind lead gen forms) actively works against AI visibility.

– Your content should help AI finish the user’s thought & answer their query.

– If a page requires a click to make sense, it’s useless in AI search.

Leaders expect AI to change SEO, not replace it

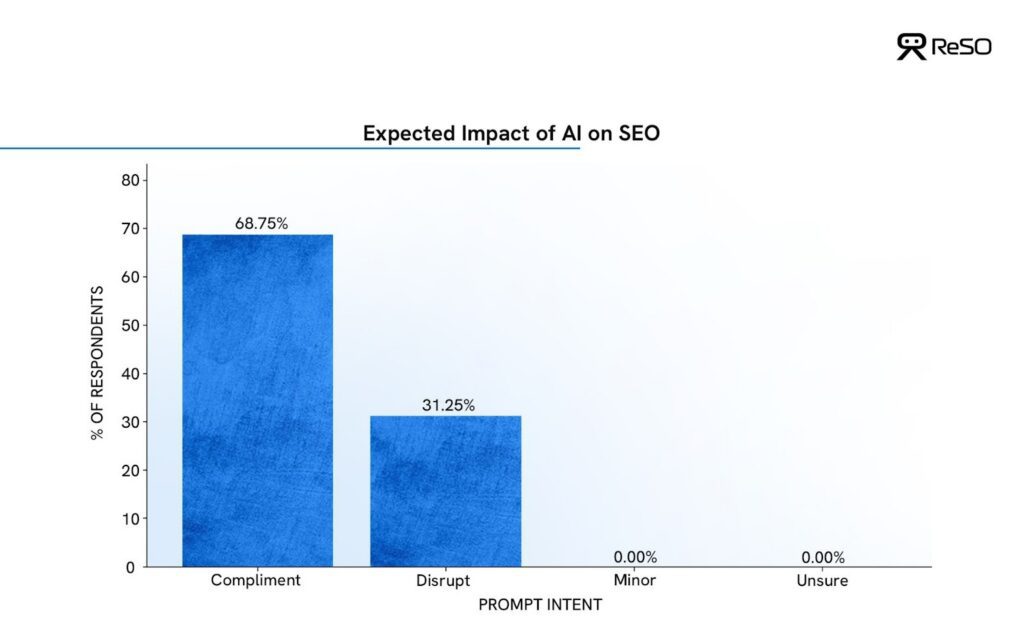

When asked how AI-generated search results will impact SEO:

- 100% of respondents expect a meaningful impact

- 69% believe AI will complement existing SEO strategies

- 31% expect significant disruption

- 0% believe the impact will be minor

This reframes the conversation.

AI is perceived not as the end of SEO, but as a redefinition of what good SEO, or rather “Search Optimization,” looks like.

The data shows a strong 2.2x preference for integration over replacement.

New Claude and ChatGPT models are released almost every quarter, and Google periodically changes its algorithm. Everything that impacts AI search (the platform, user behavior, and the AI algorithm) is in constant flux.

Leaders aren’t preparing for an SEO reset; they’re preparing for an SEO evolution they don’t yet fully understand. And this is exactly what we are here for!

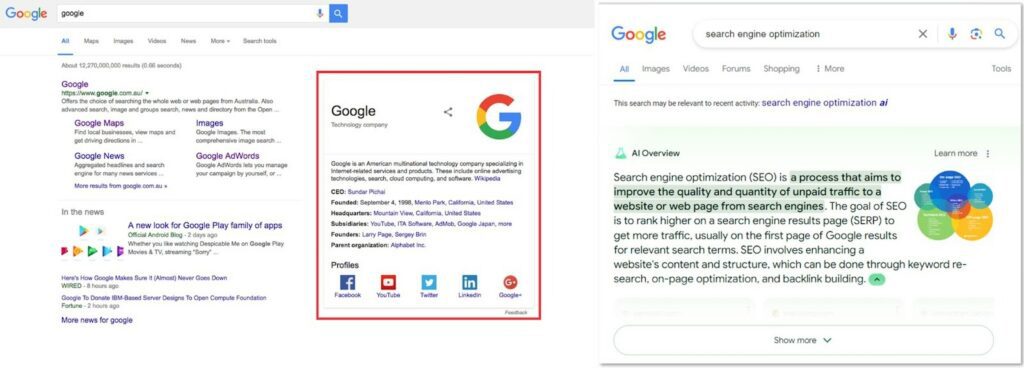

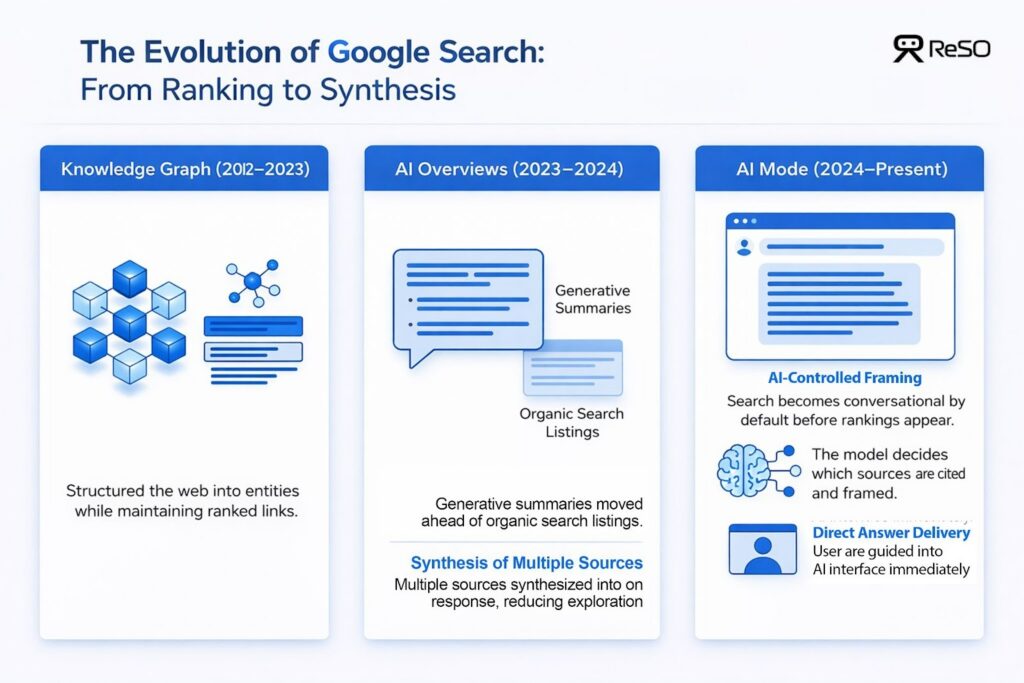

This massive transition is driven by Google and not just LLMs

AI-driven search is no longer confined to tools like ChatGPT or Perplexity. The most consequential shift is happening inside Google itself. AI Overviews have replaced Knowledge Graphs in most places, and the user is being directed to AI Mode before they even get to the first search result.

This didn’t happen all at once. What we’re seeing now is the outcome of a deliberate progression in how Google has reshaped search over time, moving from organizing information to summarizing it to fully mediating how answers are formed and delivered.

Google shifted from ranking pages to selecting sources.

Traditional search relied on:

- keyword matching

- ranked lists

- static knowledge panels

Google AI Overviews synthesise answers by:

- pulling from multiple sources

- blending editorial, educational, and third-party content

- prioritizing answer construction over link ordering

This is not an incremental algorithm update. It is a fundamental change in how Google Search works.

As McKinsey & Company describes it, AI has become a new front door to the internet – reshaping how users discover, evaluate, and trust information before they ever reach a website. Half of consumers polled in their survey now intentionally seek out AI-powered search engines, with a majority of users saying it’s the top digital source they use to make buying decisions.

Key takeaways

– Being “ranked” in the top 10 holds no value

– Being “referenced” is the new search visibility/search optimisation currency

– AI Overviews are not a feature; they are Google’s building block, transitioning traditional search to AI search

How people actually search using AI

Search behaviour in LLMs is fundamentally different from traditional search – not just in format, but in structure and depth.

Search Engine Land reports that AI-driven queries are 2-3x longer than traditional Google searches. While classic search queries typically average 2-4 words, AI search prompts frequently span 10-20+ words, often structured as full questions or multi-sentence instructions.

Users now combine context, intent, constraints, and desired outcomes into one prompt. This makes AI search meaning-first.

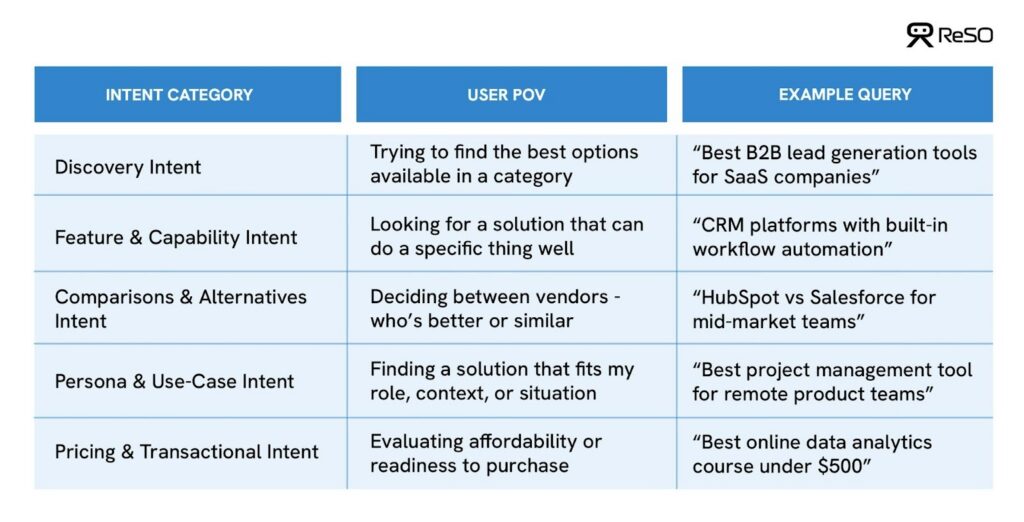

AI search is intent-first, not keyword-first

Intent is the primary signal in AI search. Unlike traditional search engines centered on keywords, LLMs interpret prompts through the lens of why a user is searching, not just what they typed.

Each intent category reflects a distinct decision mindset, and LLMs adapt their responses accordingly. Prompts that naturally lead to brand consideration, which we define as brand-inducing prompts, are extremely relevant for understanding how AI systems surface companies in their answers.

Focusing on brand-inducing prompts is the quickest way to determine your organic search strength against competition for high-intent prospects.

When examined closely, these brand-inducing prompts consistently map back to a set of intent patterns, which can be grouped into five core intent categories.

While these intent categories reflect different buyer mindsets, they also impose different structural requirements on how LLMs construct answers.

Some intents, particularly comparisons, pricing, and use-case-specific queries, cannot be answered without referencing real companies. To generate a credible response, AI systems must:

- introduce named vendors,

- validate claims across multiple external sources,

- and reconcile overlapping or conflicting information.

Other intents, such as broad discovery queries, allow AI models to remain abstract, focusing on concepts, frameworks, or best practices, with minimal or no brand attribution.

For visibility analysis, this distinction is critical.

Rather than treating all prompts equally, we isolated how intent alone influences response depth, citation volume, and source diversity across AI-generated answers. The objective was not to assess ranking or preference, but to understand how often brands are required to appear at all, and how heavily those appearances are supported by citations.

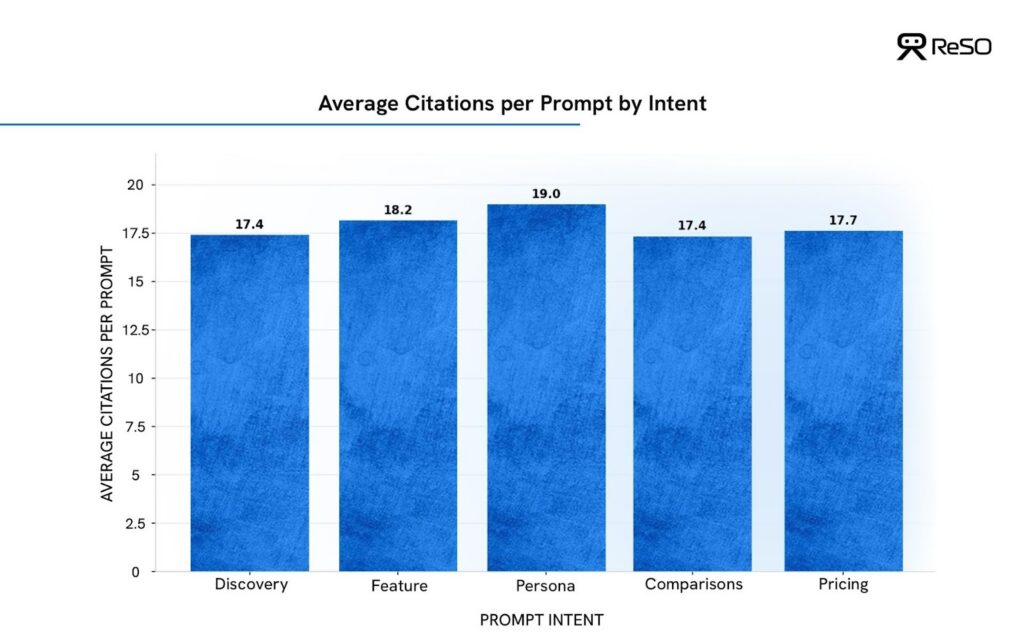

What we infer

- Persona-driven prompts receive the highest citation density, averaging ~19 citations per prompt.

- Feature and pricing prompts follow closely, clustering around ~18 citations.

- Discovery and comparison prompts sit slightly lower, both averaging ~17–17.5 citations per prompt.

The differences are incremental, but the pattern is consistent. This distribution reflects how AI systems adapt their sourcing depth as user intent matures.

Key takeaways

– Keywords describe what; intent explains why, when, where & how users search, and they expect AI to answer for that intent.

– Brand-inducing prompts are where the real competition begins; aim for these to build a pipeline.

– If AI can answer without naming vendors, visibility is optional, which explains the massive decrease in traffic on informational keywords across industries.

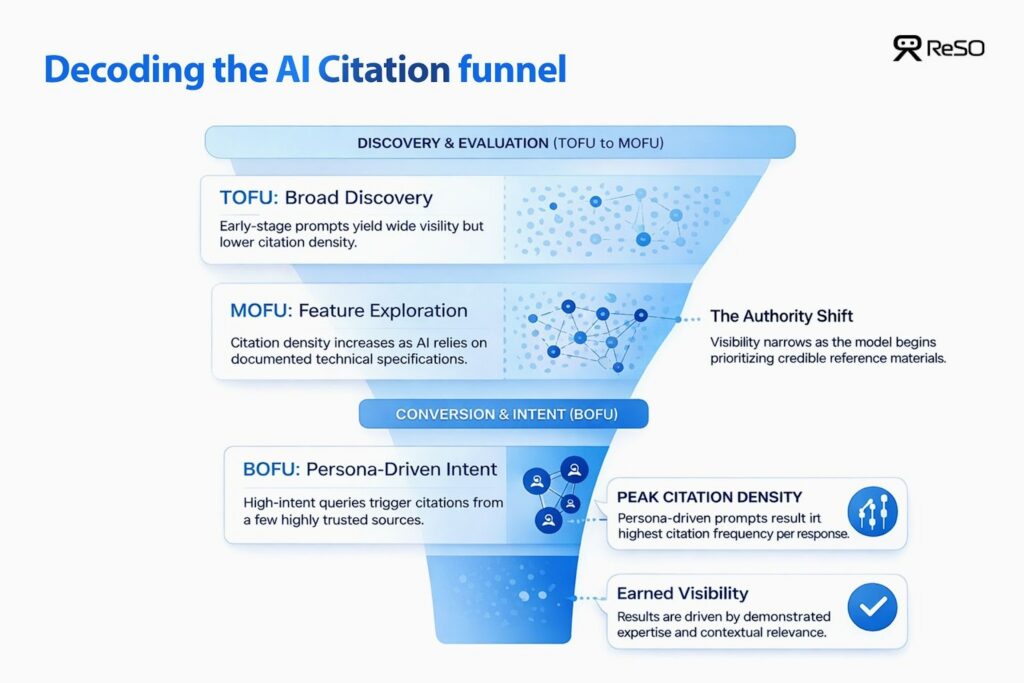

How this maps to the funnel

In short, brands cannot rely on broad awareness content alone. The strongest visibility gains come from TOFU (top-of-funnel) content focused on the ICP (Ideal Customer Profile) that compounds authority over time, enabling consistent inclusion as prompts move down the funnel.

AI prefers education over promotion

Across thousands of responses analysed, a clear and consistent pattern emerges: LLMs overwhelmingly prioritise educational and explanatory content over promotional or transactional pages.

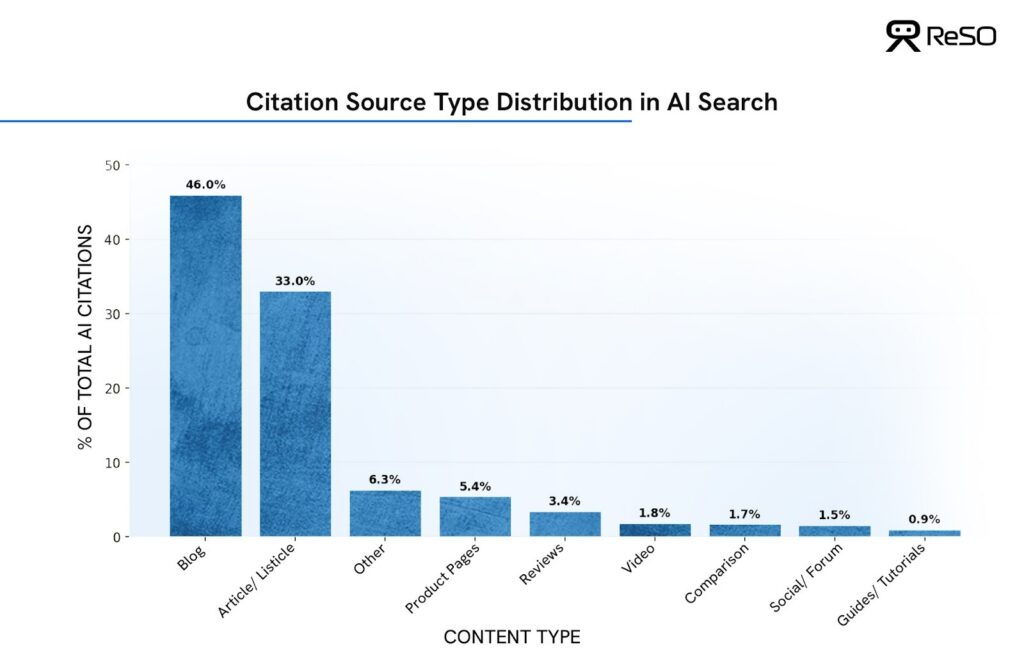

What the data shows:

- Blogs are the single largest citation source, accounting for 46% of all AI citations, making them the dominant visibility layer across AI-generated answers.

- Editorial articles and listicles contribute another 33%, reinforcing that long-form, explanatory content drives the majority of AI search visibility.

- Together, blogs and articles make up nearly 80% of total citations, showing a strong preference for narrative, educational formats over transactional pages.

- Product pages represent a small share at just 5.4%, even when prompts imply evaluation or buying intent.
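A breakdown like the one above can be reproduced for your own prompt set by tallying cited URLs per content type. The sketch below is illustrative only: the `classify_source` helper, its path-matching rules, and the sample URLs are assumptions made for this example, not the methodology or data behind this report.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical path-based rules; real classification usually needs manual review.
SOURCE_RULES = [
    ("blog", ("/blog/", "/guides/")),
    ("product_page", ("/product/", "/features/")),
    ("pricing_page", ("/pricing",)),
]

def classify_source(url: str) -> str:
    """Label a cited URL by content type using simple path matching."""
    path = urlparse(url).path.lower()
    for label, fragments in SOURCE_RULES:
        if any(fragment in path for fragment in fragments):
            return label
    return "other"

def citation_share(urls: list[str]) -> dict[str, float]:
    """Return each content type's share of all citations, as a percentage."""
    counts = Counter(classify_source(u) for u in urls)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: round(100 * n / total, 1) for label, n in counts.items()}

# Made-up citations, for illustration only.
sample = [
    "https://example.com/blog/ai-search-basics",
    "https://example.com/blog/geo-checklist",
    "https://example.org/guides/intent-mapping",
    "https://example.com/pricing",
    "https://example.net/product/overview",
]
shares = citation_share(sample)
print(shares)
```

Tracking these shares over time, per intent category, is one way to see whether your educational content is actually the layer being cited.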

Why this matters for AI visibility

Being present in AI-generated answers is therefore less about how directly a page sells, and more about how effectively it explains – in the context of what the user (and their ICP) is actually trying to solve.

AI systems respond to intent expressed through context, not keywords. The richer the situational framing, the more selective and precise the recommendations become.

A simple example

Earlier, someone planning a trip might have searched:

“Best waterproof shoes”

Today, that same intent shows up as context:

“I’m going on vacation to London in September, I think it’s supposed to rain, and I’ll be walking a lot. Which shoes should I bring that work with different outfits?”

The second prompt doesn’t ask for products directly. It explains the situation, the constraints, and the decision criteria.

AI systems respond by synthesizing advice – drawing from guides, blogs, and explanatory content that understand why the choice matters, not just what to buy.

The same principle applies to any company. Brands that win visibility are the ones that teach within the ICP’s (Ideal Customer Profile) context, not the ones that push features or pricing the hardest.

Intent shapes visibility, but doesn’t reverse the bias

While AI systems consistently favour educational content, user intent still influences how that preference is expressed.

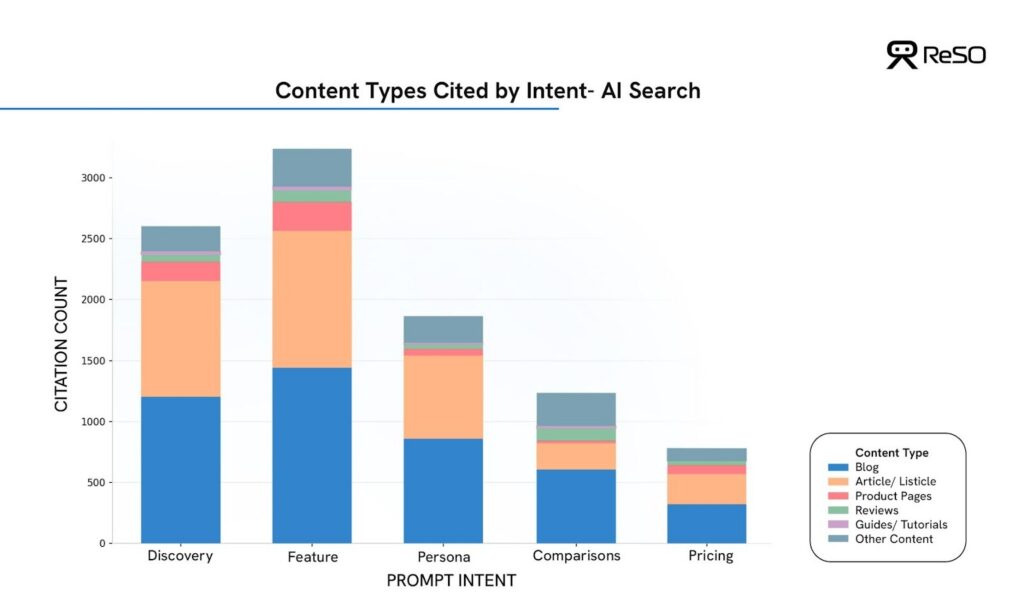

To understand this nuance, citation sources were analysed across different intent categories, including discovery, feature exploration, comparison, persona-based, and user-generated queries.

What changes with intent is the mix, not the underlying bias.

Across early-stage discovery and exploratory prompts, long-form blogs and explanatory articles dominate citations. For comparison and evaluation queries, community content and third-party perspectives increase in prominence, reflecting users’ desire for validation and lived experience. However, even in these bottom-of-funnel scenarios, product pages and pricing pages do not become dominant citation sources unless the user asks for them specifically.

In other words, intent modulates AI behaviour, but it does not override it.

Educational formats remain LLMs’ favorite type of content across the entire intent spectrum, not because AI systems avoid commercial information, but because they prioritize sources that demonstrate contextual authority before commercial relevance.

Even when a query signals evaluation or purchase consideration, LLMs continue to rely on educational content that:

- explains the problem space clearly,

- reflects real operational experience,

- and is grounded in the specific context of the buyer.

This creates a meaningful opportunity.

For most teams, the highest-leverage path to AI visibility is not late-stage sales content, but TOFU educational assets that are explicitly written for a defined ICP. Content that mirrors how a specific buyer thinks, frames the problem in their language, and references their constraints is far more likely to be surfaced and cited than generic “solution” pages.

When your content helps AI deliver a complete, confident answer, your brand becomes the trusted source for that ICP. This, in turn, makes it far more likely for you to be recommended by LLMs on brand-inducing prompts.

This distinction is where traditional SEO is failing.

Key takeaways

– Blogs and guides are the primary visibility layer. Treat educational content as your discovery engine.

– Product pages are supporting evidence.

– Pricing pages surface only when explicitly demanded.

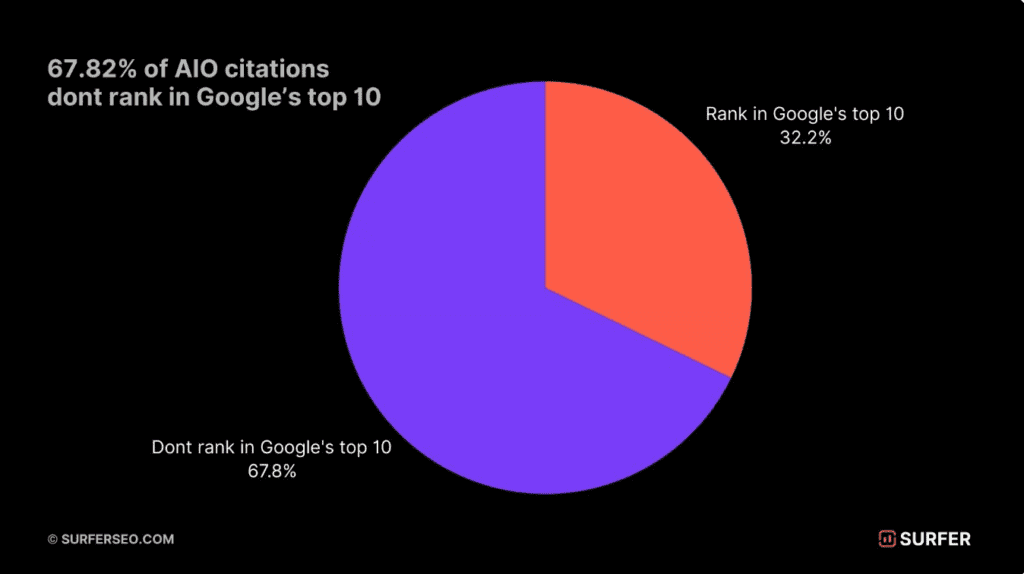

Most AI citations never ranked in traditional search

External research reinforces this pattern.

A large-scale analysis by Surfer SEO found that ~70% of sources cited in Google AI Overviews did not previously rank in the top 10 organic results, and nearly 30% had little to no measurable organic traffic prior to being surfaced.

AI search is not remixing the first page of Google. It is constructing answers based on semantic relevance, contextual completeness, and perceived authority – regardless of historical ranking performance.

This is why traditional keyword-centric optimisation fails to explain AI visibility.

How leaders are rebuilding SEO with AI search

Most leaders agree that AI search is fundamentally changing how discovery works.

What hasn’t changed fast enough is how success is being measured.

When user behavior shifts, old KPIs stop telling the truth. Click-through rate is a clear example. In AI-driven search experiences, CTR has dropped from the historical 3-4% range to well below 1% in many categories. Yet clicks are still treated as the primary signal of performance.

That’s the disconnect.

This is what shows up in GSC (Google Search Console) as the Crocodile Mouth effect: users are getting answers without clicking. But declining clicks do not mean declining influence. In fact, although fewer users click through from LLM search, those who do are 3X more likely to convert than users from traditional search.

Hence, instead of tracking the vanity metric of month-on-month click volume, focus on the source of each visit, the type of content that is getting cited, and core user engagement KPIs like:

- Time spent on site

- Engagement on landing pages

- Pipeline generation from LLMs – product signups, demo bookings
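As a starting point for this kind of segmentation, sessions can be bucketed by referrer before comparing conversion rates. The sketch below is a minimal illustration under stated assumptions: the referrer hostnames, the `discovery_source` and `conversion_by_source` helpers, and the sample sessions are all hypothetical, and clicks from Google AI Overviews arrive with an ordinary Google referrer, so this approach cannot separate them from classic results.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Assumed referrer hostnames; adjust to what your analytics actually records.
LLM_HOSTS = {"chatgpt.com", "chat.openai.com", "perplexity.ai", "www.perplexity.ai"}
SEARCH_HOSTS = {"www.google.com", "www.bing.com"}

def discovery_source(referrer: str) -> str:
    """Map a referrer URL to a coarse discovery source."""
    host = urlparse(referrer).netloc.lower()
    if host in LLM_HOSTS:
        return "llm"
    if host in SEARCH_HOSTS:
        return "traditional_search"
    return "other"

def conversion_by_source(sessions):
    """sessions: iterable of (referrer_url, converted) pairs.

    Returns the conversion rate (0..1) for each discovery source seen.
    """
    totals = defaultdict(lambda: [0, 0])  # source -> [sessions, conversions]
    for referrer, converted in sessions:
        bucket = totals[discovery_source(referrer)]
        bucket[0] += 1
        bucket[1] += int(converted)
    return {src: conv / n for src, (n, conv) in totals.items()}

# Made-up sessions, for illustration only.
sessions = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search", False),
    ("https://www.google.com/", False),
    ("https://www.google.com/", True),
    ("https://www.google.com/", False),
    ("https://www.google.com/", False),
]
rates = conversion_by_source(sessions)
print(rates)
```

The same bucketing can be applied to time on site or demo bookings, so each KPI is read per discovery source rather than as one blended number.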

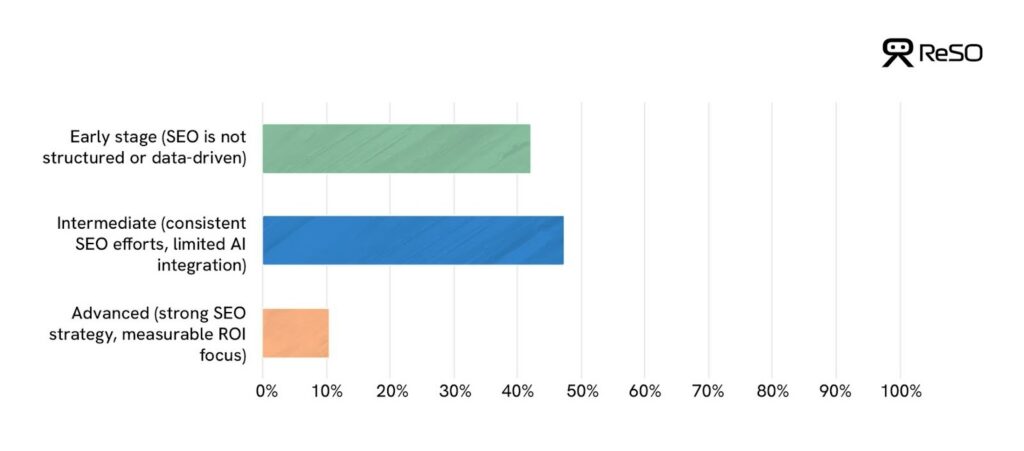

SEO maturity does not equal AI readiness

Most teams do not see themselves as beginners when it comes to SEO.

The majority of respondents classify their programs as intermediate or advanced, with only a small minority identifying as early stage. However, this maturity was built in the traditional SEO environment.

SEO focus has largely been shaped in a ranking-first, click-driven scope – one optimized around keywords, positions, traffic, and attribution models that assumed the click as the primary outcome. Those skills produced results when discovery flowed through links.

AI search breaks the idea that discovery outcomes are linear.

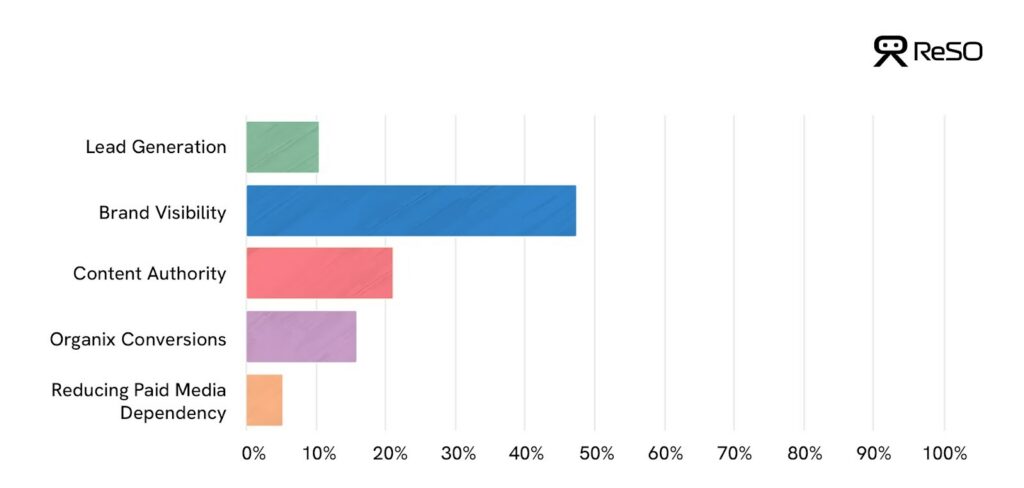

Traditionally, discovery was judged by how quickly it produced leads or conversions. The data shows that this assumption no longer holds. Teams increasingly associate AI search with brand visibility and content authority, not immediate lead capture.

This makes sense. AI systems answer questions directly and shape opinions before a user ever clicks. Discovery influence now happens earlier and more quietly, affecting what brands users remember and trust rather than what they click in the moment.

One signal teams are beginning to notice, but not yet measuring intentionally, is direct brand traffic.

As AI reduces the need to click, branded and direct visits often increase even when non-branded organic traffic falls. Users encounter a brand inside AI-generated answers, then return later by searching for the brand directly or visiting the site intentionally. These behaviors sit outside traditional attribution models, making AI-driven discovery easy to underestimate.

What appears as declining performance through a click-based lens is often a shift in how influence shows up, not a drop in demand.

Key takeaways

– CTR declining does not equal influence declining.

– AI-referred users behave differently and stay longer on your website.

– Measure depth, not volume. Segment performance by source of discovery, not channel.

– Stop forcing AI behavior into old dashboards & KPIs.

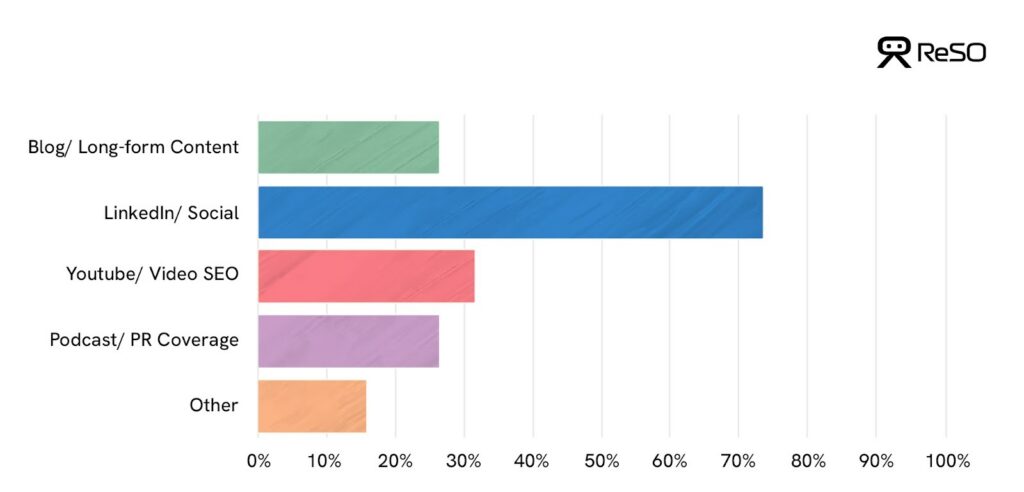

Discovery is still framed as channels, not systems

Leaders also continue to conceptualise discovery through a channel-based lens.

Most teams still organise discovery around channels – SEO, social, video, PR, each with its own goals, owners, and metrics. This structure made sense when users moved predictably from one link to another according to the rank allocated to each landing page on the internet by Google.

Those were simpler times for marketing leaders.

However, content is no longer evaluated in isolation. Blogs, LinkedIn posts, videos, PR mentions, and documentation are pulled together, cross-referenced, and resolved into a single answer. When these channels operate in silos, AI systems receive fragmented signals about what a brand represents.

LLMs process all of this information through entity mapping.

AI systems do not just rank pages. They build an understanding of entities (brands, products, people, and categories) and the relationships between them. Every channel contributes signals to that entity: consistency of language, clarity of positioning, corroboration across sources, and external validation.

When channels are disconnected, the entity appears weak or ambiguous. When they reinforce each other, AI systems gain confidence in how and when to surface the brand.

Key takeaways

– Every marketing channel should feed the same entity with consistent language to gain AI trust.

– Direct and branded traffic are now discovery KPIs.

– What looks like “lost traffic” is often displaced discovery (attribution mapping needs to change).

You’re not competing on Google for a rank anymore

You’re competing inside AI systems, each with its own set of unique rules.

Any AI system, whether a search engine, an assistant, or an embedded AI interface, constructs its own view of the world. Some prioritise editorial authority. Others lean more heavily on community validation, product documentation, or real-world usage signals.

This means visibility is no longer limited to getting on Google’s first page. It is system-dependent.

Winning search visibility requires an understanding of how different AI systems assemble answers and ensuring your entity is consistently represented across the sources they trust.

Why AI platforms disagree on who to cite

One of the most common assumptions teams make about AI search is that visibility is transferable – that if a brand performs well in one AI engine, it should perform similarly across others.

Our data shows the opposite.

Even when AI platforms are given the same set of prompts, in the same industry, at the same time, they consistently surface different brands, in different volumes, with different levels of concentration. This isn’t noise. It’s design.

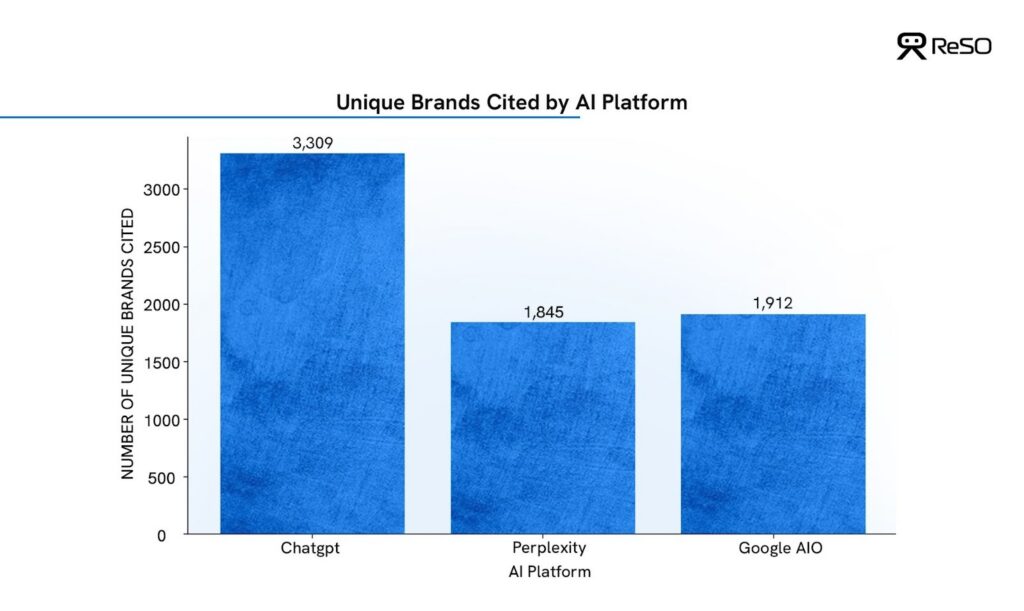

To understand why, we analysed brand citations across ChatGPT, Google AI Overviews, and Perplexity using a controlled prompt set within a single B2B SaaS category. The goal was not to rank brands, but to observe how each platform constructs trust and authority.

Each AI platform has a different trust threshold

The first difference becomes visible at a macro level: how many brands each platform is willing to cite at all.

What the data shows:

- ChatGPT cites the widest universe of brands, surfacing over 3,300 unique brands across the same prompt set

- Google AI Overviews cite a significantly narrower set, with under 2,000 unique brands

- Perplexity is the most selective, citing the smallest pool of brands overall

Each platform applies a different threshold for what qualifies as “reference-worthy.”

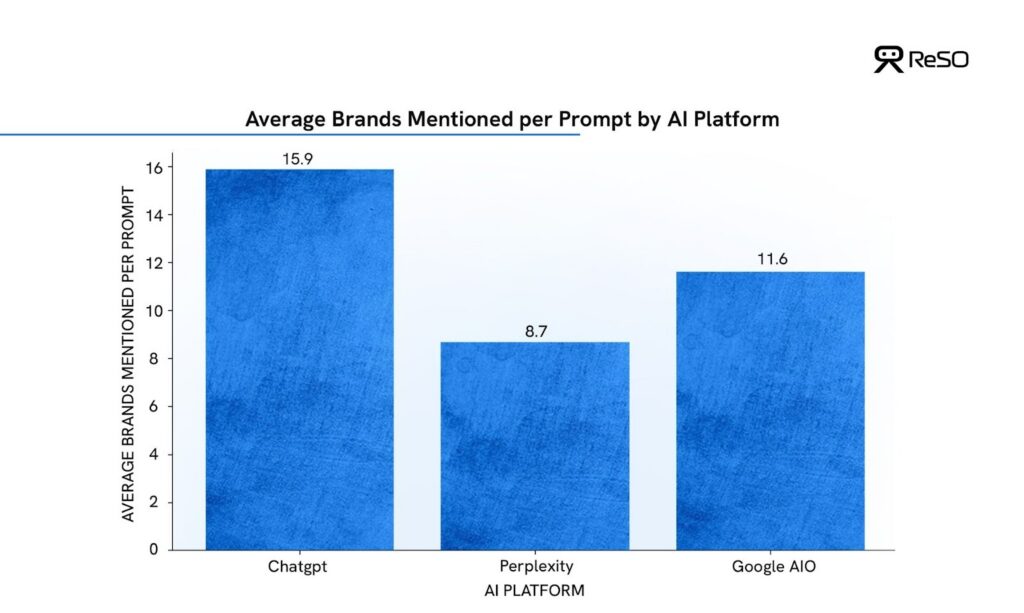

Competitive density varies sharply by platform

Breadth alone doesn’t tell the full story. The second key difference is how crowded each answer set is. In other words, how many brands are typically mentioned per response.

What the data shows:

- ChatGPT mentions the highest number of brands per prompt, averaging ~16 brands

- Google AI Overviews sit in the middle, averaging ~12 brands

- Perplexity is the most constrained, averaging ~9 brands per prompt

Brand visibility in ChatGPT is more distributed, while brand visibility in Perplexity is more competitive. In practical terms, this means that a citation in Perplexity carries a higher relative concentration of authority, while ChatGPT rewards broader presence.

Same intent, different answers

The most revealing pattern emerges when responses are compared prompt-for-prompt.

Across the controlled analysis:

- The overlap of cited brands across all three platforms was consistently low, reserved only for established enterprise-grade industry leaders

- SMB brands that surfaced prominently in one engine were often absent in others

- No single platform could be treated as a proxy for “AI search” as a whole
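One way to quantify this kind of cross-platform overlap for a single prompt is to compare the sets of brands each platform cites. The sketch below uses Jaccard similarity as the overlap measure. That choice is an assumption for illustration, since this report does not specify its overlap metric, and the brand names, `jaccard`, and `overlap_report` are hypothetical.

```python
from itertools import combinations

def jaccard(a: set[str], b: set[str]) -> float:
    """Share of brands common to both sets, out of all brands in either set."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def overlap_report(brands_by_platform: dict[str, set[str]]):
    """Return pairwise Jaccard scores and the brands cited by every platform.

    Expects at least one platform in the mapping.
    """
    pairwise = {
        (p, q): round(jaccard(brands_by_platform[p], brands_by_platform[q]), 2)
        for p, q in combinations(sorted(brands_by_platform), 2)
    }
    shared_by_all = set.intersection(*brands_by_platform.values())
    return pairwise, shared_by_all

# Made-up citations for one prompt, for illustration only.
cited = {
    "chatgpt": {"BrandA", "BrandB", "BrandC", "BrandD"},
    "google_ai_overviews": {"BrandA", "BrandC", "BrandE"},
    "perplexity": {"BrandA", "BrandF"},
}
pairwise, shared = overlap_report(cited)
print(pairwise)
print(shared)
```

Averaging the pairwise scores across a full prompt set gives a rough read on how transferable visibility is between any two platforms.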

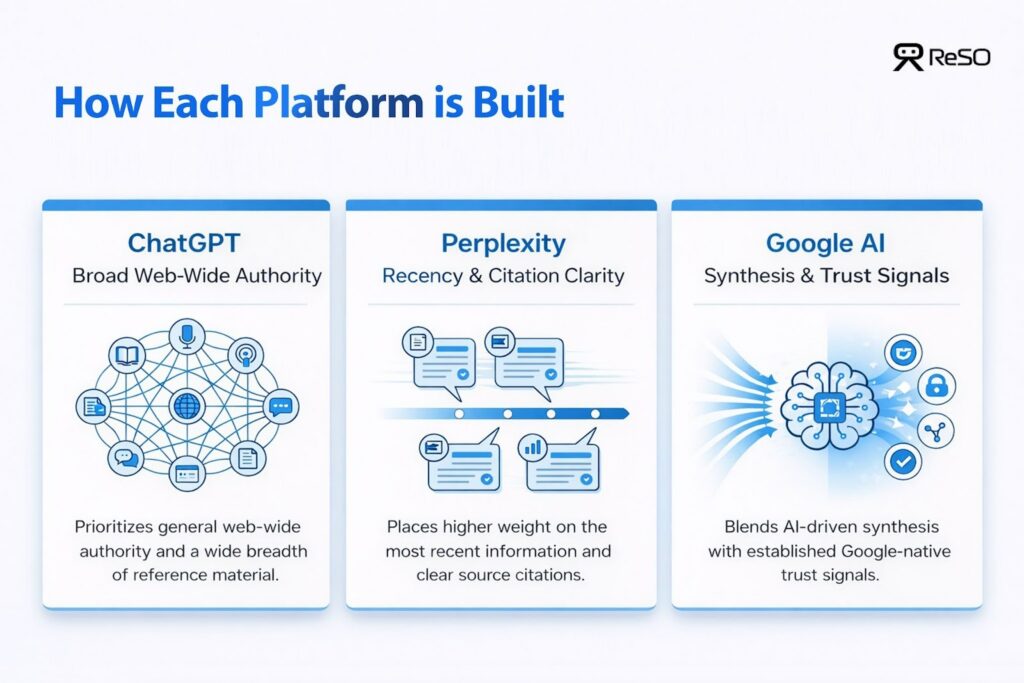

In other words, AI platforms are not converging on a shared definition of authority. They are diverging – by design.

This divergence reflects how each platform is built:

- ChatGPT prioritises web-wide authority and breadth of reference

- Perplexity places greater weight on recency and citation clarity

- Google AI Overviews blend synthesis with Google-native trust signals

Why brands should invest in GEO

Throughout this report, one pattern repeats: visibility in AI search is not decided by whether the page is ranking on Google, but by whether a brand can be confidently referenced when an answer is formed.

AI systems don’t evaluate pages in isolation. They pull signals from content, documentation, third-party sources, and consistent positioning across channels, then assemble those signals into answers. When those signals are fragmented or inconsistent, brand visibility & discovery drop, regardless of traditional SEO performance.

This gap is real and can be solved only by a structured AISO approach.

How to build visibility in AI search

The building blocks have not changed. Content, expertise, authority, and technical foundations still matter. What has changed is how they need to work together.

SEO was built for stacking: pages competing for position. AI search rewards assembly: signals reinforcing each other across contexts. Here’s how you can build that coherence:

Actionables

– Designing content to be referenced, not clicked

– Making explanations stand alone, so individual sections remain credible when extracted

– Aligning language and claims across blogs, documentation, PR, and third-party sources

– Reinforcing a clear entity identity across all discovery surfaces

– Optimising for intent patterns, not isolated keywords

– Measuring success through presence, citation, and consistency, not traffic alone

We also have an exhaustive 100-item checklist (the same one we use within ReSO) covering every important aspect of a strong Generative Engine Optimisation (GEO) strategy.